Linux duplicate files finder how-to

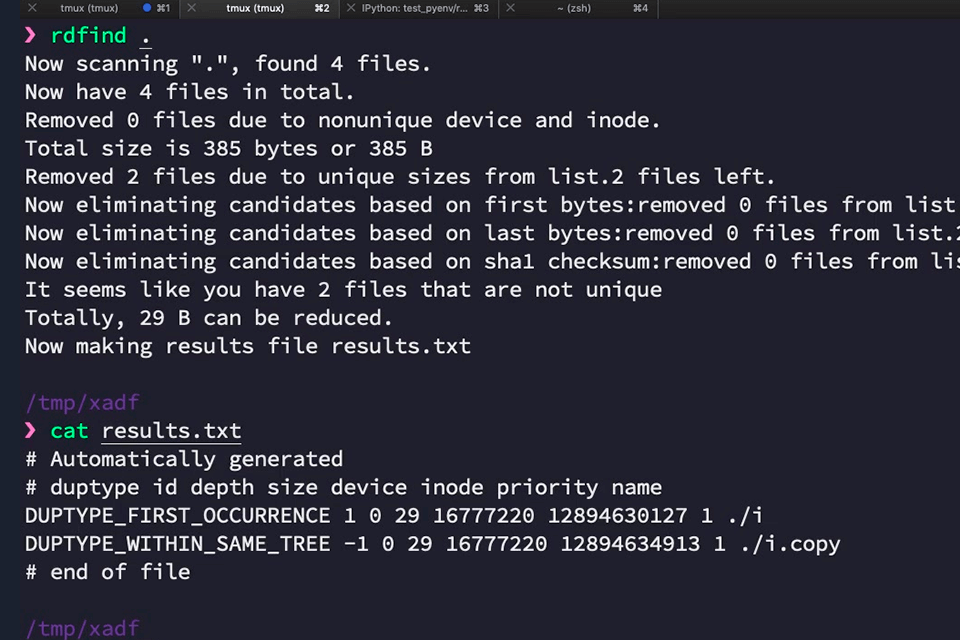

A long time ago, when I was a newcomer to the Linux world, I used a duplicate file finder named fdupes. After a while I switched my OS to Windows and then back to Linux. Today, while looking into btrfs file compression, I came across fdupes again and ran it on my test system.

fslint is a graphical program that can find duplicate files of any type by md5sum. The image shows a bunch of duplicate PDF files in my Downloads directory. You can change the advanced search parameters to search by file type and restrict the search to images only. If the images are not identical, they won't be flagged as duplicates.

Kdiff3 is one of three free tools in the Linux Mint Software Center that use a graphical interface to help you select, compare and remove duplicate files one by one. In this article, we will review how to use Kdiff3 to find and remove duplicate files in Linux Mint.

Duplicates can also occur as records (lines) inside a single file. Let us consider a file that contains repeated records and see the different ways to find the duplicates.

The uniq command has a "-d" option which lists only the duplicate records. The sort command is run first because uniq compares adjacent lines only, so it gives the intended result only on sorted input. Without the "-d" option, uniq removes the duplicate records from its output instead of listing them.

Another method is almost the same as the earlier awk one, the only difference being that hashes are used. Every time a record is read, it is looked up in the array "arr". If it is present, the record is printed since it is a duplicate; otherwise the array is updated with this record.
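To make the idea of finding duplicate files by md5sum more concrete, here is a minimal Python sketch. It is not how fslint, fdupes or Kdiff3 are implemented; it only illustrates the general technique of grouping files by content hash. The function names `file_md5` and `find_duplicate_files` are made up for this example.

```python
import hashlib
import os
from collections import defaultdict


def file_md5(path, chunk_size=65536):
    """Return the MD5 hex digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_duplicate_files(root):
    """Group regular files under root by MD5 digest (hypothetical helper).

    Returns a list of groups; each group is a sorted list of paths
    whose contents hash to the same digest.
    """
    by_digest = defaultdict(list)
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            # Skip symlinks so the same file is not counted twice.
            if os.path.isfile(path) and not os.path.islink(path):
                by_digest[file_md5(path)].append(path)
    return [sorted(paths) for paths in by_digest.values() if len(paths) > 1]
```

Calling `find_duplicate_files(os.path.expanduser("~/Downloads"))` would return one list per set of identical files. Because the comparison is on content, two images that merely look alike but differ in bytes end up in different groups.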

How to find the duplicate records / lines from a file in Linux?
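The two approaches mentioned in this post, looking each record up in an array and sorting before comparing neighbours, can be sketched in Python as follows. This is only an illustration of the logic, not a replacement for the awk or sort/uniq commands, and the function names and sample records are invented for the example.

```python
def duplicates_by_lookup(lines):
    """Report a record each time it is seen again (the array-lookup idea)."""
    seen = set()
    duplicates = []
    for line in lines:
        record = line.rstrip("\n")
        if record in seen:
            # Already stored, so this occurrence is a duplicate.
            duplicates.append(record)
        else:
            seen.add(record)
    return duplicates


def duplicates_by_sorting(lines):
    """Sort first, then compare neighbours (the idea behind sort plus uniq -d).

    Each duplicated record is reported once.
    """
    records = sorted(line.rstrip("\n") for line in lines)
    duplicates = []
    for previous, current in zip(records, records[1:]):
        if previous == current and (not duplicates or duplicates[-1] != current):
            duplicates.append(current)
    return duplicates


# Hypothetical sample records, not taken from the original post.
sample = ["Linux\n", "Unix\n", "Linux\n", "Solaris\n", "Linux\n", "Unix\n"]
print(duplicates_by_lookup(sample))
print(duplicates_by_sorting(sample))
```

The lookup version keeps the input order and reports a record once for every repeat, while the sorting version reports each duplicated record a single time.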
